Andrew Maynard, Professor of Advanced Technology Transitions, Arizona State University

Sean Dudley, Chief Research Information Officer and Associate Vice President for Research Technology, Arizona State University

Twenty years ago, nanotechnology was the artificial intelligence of its time. The specific details of these technologies are, of course, a world apart. But the challenges of ensuring each technology’s responsible and beneficial development are surprisingly alike. Nanotechnology, which involves technologies at the scale of individual atoms and molecules, even carried its own existential risk in the form of “gray goo.”

As potentially transformative AI-based technologies continue to emerge and gain traction, though, it is not clear that people in the artificial intelligence field are applying the lessons learned from nanotechnology.

As scholars of the future of innovation, we explore these parallels in a new commentary in the journal Nature Nanotechnology. The commentary also looks at how a lack of engagement with a diverse community of experts and stakeholders threatens AI’s long-term success.

Nanotech excitement and fear

In the late 1990s and early 2000s, nanotechnology transitioned from a radical and somewhat fringe idea to mainstream acceptance. The U.S. government and other administrations around the world ramped up investment in what was claimed to be “the next industrial revolution.” Government experts made compelling arguments for how, in the words of a foundational report from the U.S. National Science and Technology Council, “shaping the world atom by atom” would positively transform economies, the environment and lives.

But there was a problem. On the heels of public pushback against genetically modified crops, together with lessons learned from recombinant DNA and the Human Genome Project, people in the nanotechnology field had growing concerns that there could be a similar backlash against nanotechnology if it were handled poorly.

These concerns were well grounded. In the early days of nanotechnology, nonprofit organizations such as the ETC Group, Friends of the Earth and others strenuously objected to claims that this type of technology was safe, that there would be minimal downsides and that experts and developers knew what they were doing. The era saw public protests against nanotechnology and – disturbingly – even a bombing campaign by environmental extremists that targeted nanotechnology researchers.

Just as with AI today, there were concerns about the effect on jobs as a new wave of skills and automation swept away established career paths. Also foreshadowing current AI concerns, worries about existential risks began to emerge, notably the possibility of self-replicating “nanobots” converting all matter on Earth into copies of themselves, resulting in a planet-encompassing “gray goo.” This particular scenario was even highlighted by Sun Microsystems co-founder Bill Joy in a prominent article in Wired magazine.

Many of the potential risks associated with nanotechnology, though, were less speculative. Just as there’s a growing focus on more immediate risks associated with AI in the present, the early 2000s saw an emphasis on examining tangible challenges related to ensuring the safe and responsible development of nanotechnology. These included potential health and environmental impacts, social and ethical issues, regulation and governance, and a growing need for public and stakeholder collaboration.

The result was a profoundly complex landscape around nanotechnology development that promised incredible advances yet was rife with uncertainty and the risk of losing public trust if things went wrong.

How nanotech got it right

One of us – Andrew Maynard – was at the forefront of addressing the potential risks of nanotechnology in the early 2000s as a researcher, co-chair of the interagency Nanotechnology Environmental and Health Implications working group and chief science adviser to the Woodrow Wilson International Center for Scholars Project on Emerging Technology.

At the time, working on responsible nanotechnology development felt like playing whack-a-mole with the health, environmental, social and governance challenges presented by the technology. For every solution, there seemed to be a new problem.

Yet, through engaging with a wide array of experts and stakeholders – many of whom were not authorities on nanotechnology but who brought critical perspectives and insights to the table – the field produced initiatives that laid the foundation for nanotechnology to thrive. These included multistakeholder partnerships, consensus standards, and initiatives spearheaded by global bodies such as the Organization for Economic Cooperation and Development.

As a result, many of the technologies people rely on today are underpinned by advances in nanoscale science and engineering. Even some of the advances in AI rely on nanotechnology-based hardware.

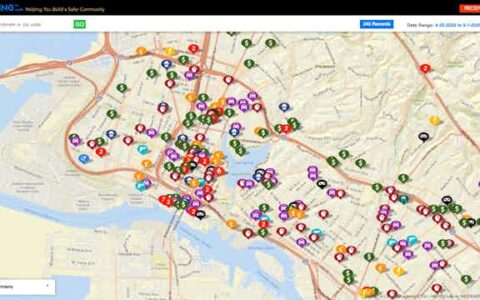

In the U.S., much of this collaborative work was spearheaded by the cross-agency National Nanotechnology Initiative. In the early 2000s, the initiative brought together representatives from across the government to better understand the risks and benefits of nanotechnology. It helped convene a broad and diverse array of scholars, researchers, developers, practitioners, educators, activists, policymakers and other stakeholders to help map out strategies for ensuring socially and economically beneficial nanoscale technologies.

In 2003, the 21st Century Nanotechnology Research and Development Act became law and further codified this commitment to participation by a broad array of stakeholders. The coming years saw a growing number of federally funded initiatives – including the Center for Nanotechnology and Society at Arizona State University (where one of us was on the board of visitors) – that cemented the principle of broad engagement around emerging advanced technologies.

Experts only at the table

These and similar efforts around the world were pivotal in ensuring the emergence of beneficial and responsible nanotechnology. Yet despite similar aspirations around AI, these same levels of diversity and engagement are missing. AI development as practiced today is, by comparison, much more exclusionary. The White House has prioritized consultations with AI company CEOs, and Senate hearings have drawn preferentially on technical experts.

Based on the lessons learned from nanotechnology, we believe this approach is a mistake. While members of the public, policymakers and experts outside the domain of AI may not fully understand the intimate details of the technology, they are often fully capable of understanding its implications. More importantly, they bring a diversity of expertise and perspectives to the table that is essential for the successful development of an advanced technology like AI.

This is why, in our Nature Nanotechnology commentary, we recommend learning from the lessons of nanotechnology by engaging early and often with experts and stakeholders who may not know the technical details and science behind AI but nevertheless bring knowledge and insights essential for ensuring the technology’s appropriate success.

The clock is ticking

Artificial intelligence could be the most transformative technology that’s come along in living memory. Developed smartly, it could positively change the lives of billions of people. But this will happen only if society applies the lessons from past advanced technology transitions like the one driven by nanotechnology.

As with the formative years of nanotechnology, addressing the challenges of AI is urgent. The early days of an advanced technology transition set the trajectory for how it plays out over the coming decades. And with the recent pace of progress in AI, this window is closing fast.

It is not just the future of AI that’s at stake. Artificial intelligence is only one of many transformative emerging technologies. Quantum technologies, advanced genetic manipulation, neurotechnologies and more are coming fast. If society doesn’t learn from the past to successfully navigate these imminent transitions, it risks losing out on the promises they hold and faces the possibility of each causing more harm than good.